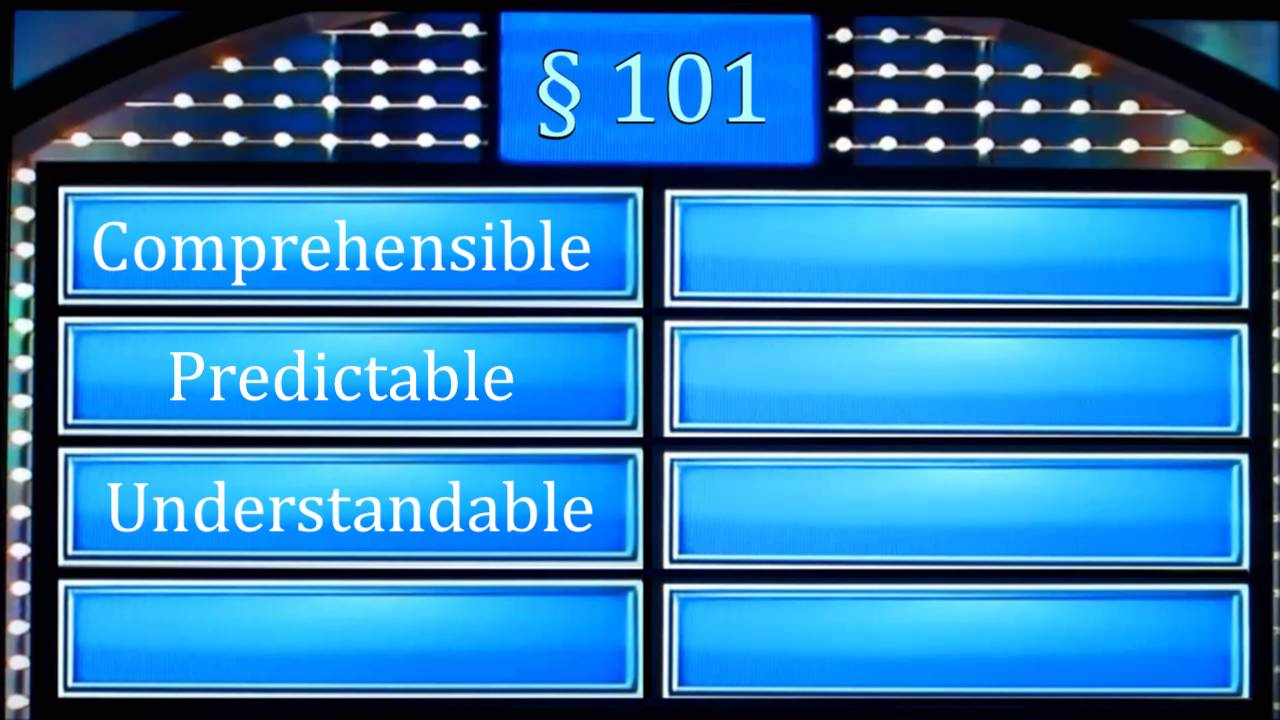

Critics of the Alice/Mayo framework for § 101 patentable subject matter tend to claim that it’s impossible to understand or apply. Thanks to an enterprising 3L at Stanford, we now have survey evidence that the critics (and Judge Michel) are wrong. It’s time to play… § 101 Feud!

From Various Parts, It’s… § 101 Critics

Critics have used some lofty rhetoric to attack § 101. It’s been called a crisis, foggy, unadministrable, and a noose around the necks of inventors. Personages as eminent as a former Federal Circuit judge have claimed it’s “too vague, too subjective, too unpredictable.”

But none of them have actually backed their assertions up with any data. For that, we’ll need to look somewhere else.

From Stanford Law School, It’s… Jason Reinecke!

A couple weeks ago, Jason Reinecke (the aforementioned Stanford Law student) posted an article summarizing his research.

First, Jason identified top intellectual property practitioners: lawyers recognized by their peers as the best at patent litigation, prosecution, and counseling. Then he asked them to do one simple thing: read a software patent claim and decide whether it’s patent-eligible or patent-ineligible under § 101. He surveyed 231 lawyers, drawn from both prosecution and litigation practices.

Here’s the trick: each of the claims was from a recent district court case that reached a determination on patent eligibility. 56% of the claims were determined ineligible; 44% were determined eligible.[1. If you listened to § 101 critics, you’d assume that 99% of the decisions would have been that the patent was ineligible. That is clearly not the case.] That means Jason could compare the lawyers’ predictions to the district courts’ determinations. If the lawyers get it right, well, that signals that the § 101 test is something practitioners can apply in a useful way.

Tell Me If This Patent Claim Is Eligible

As it turns out, and contrary to critics, lawyers are pretty good at understanding whether claims are patent-eligible. Prosecutors were right about eligibility around 67% of the time; litigators fared a little more poorly, getting it right 60% of the time.[2. Interestingly, some litigators were significantly better than 60%; others were worse. Prosecutors did not show a significant difference in results that would suggest some are better than others. Significant here is used in the statistical sense.] That’s not too bad, right?[3. I took the test for fun—it’s available at https://stanforduniversity.qualtrics.com/jfe/form/SV_efVRMDNDTrHUNxj – and got 3/4 right. Technically I got 4/5 right, but I recognized one of the cases so I won’t claim it as part of my record.]

Now consider that survey respondents didn’t have the specification of the patent.

Or the priority date.

Or any other context for the patent.

And that they spent, on average, around a minute per claim.

A minute per claim and you get eligibility right two thirds of the time. That’s hardly incomprehensible, impossible to understand, or impossible to apply consistently.

“Attorneys might be more worried about Alice’s scope than they should be”

That’s a direct quote from the article, and one I agree with. This is even reflected in the data. Attorneys were significantly more likely to be wrong by guessing ineligible on an eligible claim than vice versa. This suggests that attorneys are pessimistically biased to think that eligible patents are ineligible.

That might just help explain why some critics think Alice is a bigger problem than it actually is.[4. As previously posted on Patent Progress, it’s also simply not that significant of a problem numerically—§ 101 rejections have not become significantly more common since the Alice decision.]